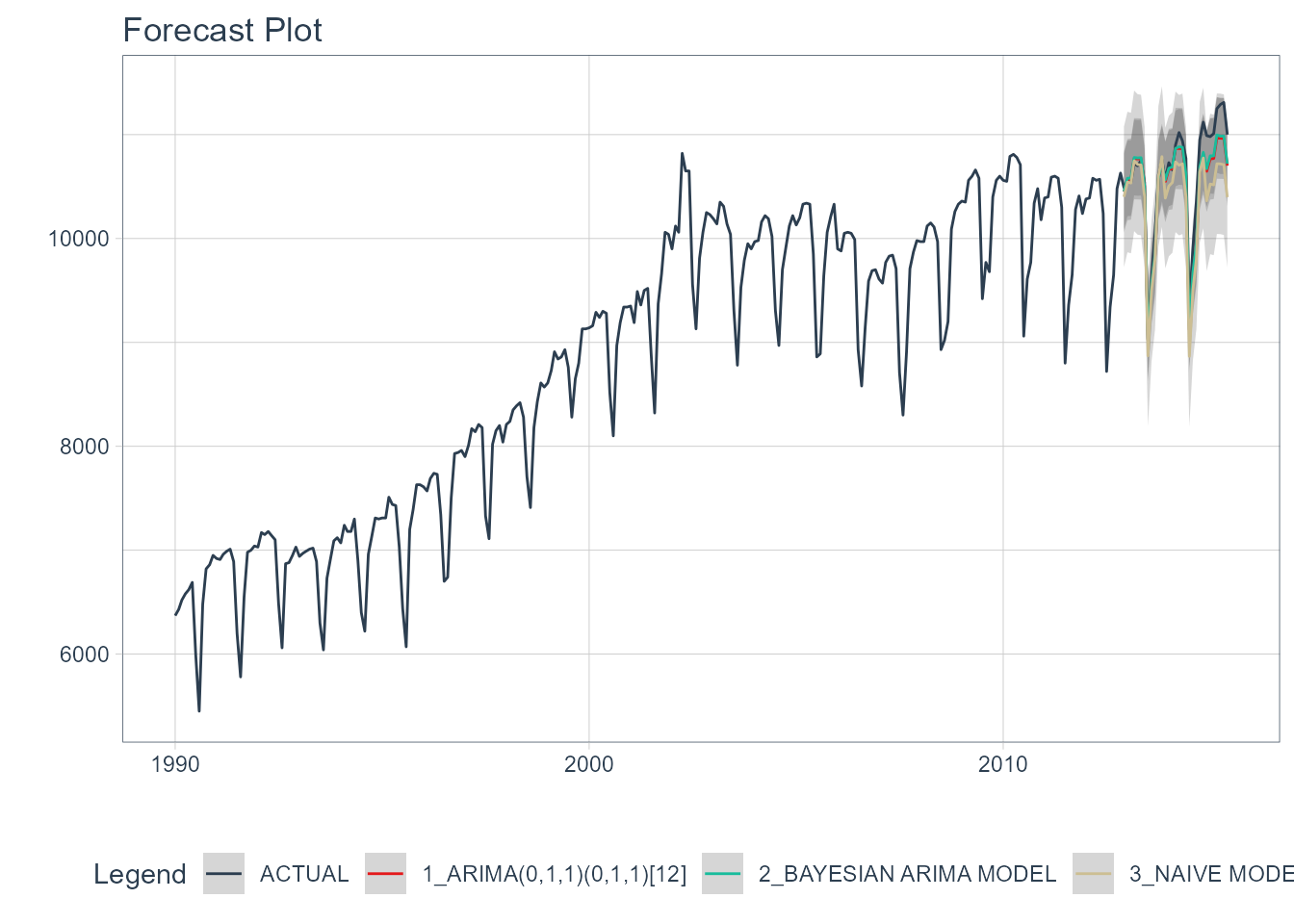

We start with how to compare different time series models against each other. Forecast accuracy measures how much difference there is between the actual value and the forecast for that value. The simplest comparison uses scale-dependent errors, which require all the models to be on the same scale: the Mean Absolute Error (MAE) and the Root Mean Squared Error (RMSE). MAE is the mean of the absolute differences between the actual and forecast values. RMSE is the square root of the mean of the squared differences, so it penalizes large errors more heavily than MAE does.

We also have the Mean Absolute Scaled Error (MASE), which measures the forecast error relative to the error of a naive forecast. When we have MASE = 1, the model is exactly as good as just picking the last observation. MASE = 0.5 means that the model's mean absolute error is half that of the naive forecast. When MASE > 1, the model performs worse than the naive forecast and needs improvement.

The Mean Absolute Percentage Error (MAPE) divides each absolute forecast error by the actual observation value and averages the results as a percentage. The crucial point is that MAPE puts much more weight on extreme values and positive errors, which makes MASE a favored metric.
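To make these metrics concrete, here is a minimal Python sketch of MAE, RMSE, MAPE and MASE. The function names and the toy numbers are illustrative assumptions, not taken from any particular library. MASE is scaled here by the in-sample error of a one-step naive forecast (each value predicted by the previous observation), which is one common convention.

```python
import math


def mae(actual, forecast):
    """Mean of the absolute differences between actual and forecast."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def rmse(actual, forecast):
    """Square root of the mean squared difference."""
    squared = [(a - f) ** 2 for a, f in zip(actual, forecast)]
    return math.sqrt(sum(squared) / len(squared))


def mape(actual, forecast):
    """Mean absolute percentage error; undefined if any actual value is 0."""
    ratios = [abs((a - f) / a) for a, f in zip(actual, forecast)]
    return 100.0 * sum(ratios) / len(ratios)


def mase(actual, forecast, train):
    """Forecast MAE divided by the in-sample MAE of a one-step naive forecast.

    Assumption: the naive forecast predicts each training value with the
    previous observation, so `train` needs at least two points.
    """
    naive_errors = [abs(train[i] - train[i - 1]) for i in range(1, len(train))]
    naive_mae = sum(naive_errors) / len(naive_errors)
    return mae(actual, forecast) / naive_mae


# Made-up toy data, for illustration only.
train = [10, 12, 11, 13, 12]       # history used to scale MASE
actual = [14, 13, 15, 16]          # held-out observations
forecast = [13, 14, 14.5, 15.5]    # model predictions for the held-out period

print("MAE :", mae(actual, forecast))
print("RMSE:", rmse(actual, forecast))
print("MAPE:", mape(actual, forecast))
print("MASE:", mase(actual, forecast, train))
```

With this toy data the forecast MAE is 0.75 and the naive in-sample MAE is 1.5, so MASE comes out at 0.5: the model's average absolute error is half that of the naive forecast.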

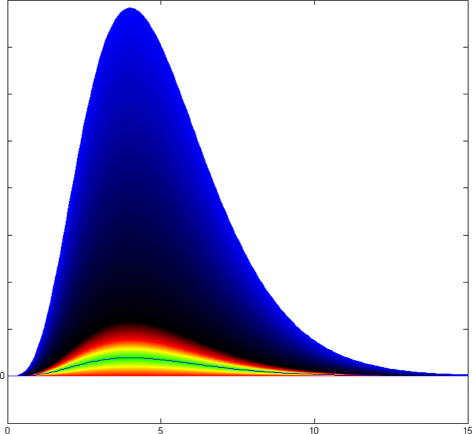

In this post we will review the statistical background for time series analysis and forecasting.
